At the end of last year, I attended a workshop run by PROBabLE Futures on AI in Law Enforcement: Lessons from the Past, Priorities for the Future (11th December 2025). My takeaway was that, whilst technology rapidly evolves, the fundamental challenges of successful public-facing technology deployments remain strikingly consistent.

The speakers traced the evolution of both historical and modern policing technologies, drawing clear parallels with today’s approach to adopting AI. One example stood out as a particularly compelling case study that achieved not just technical adoption, but public acceptance - the breathalyser.

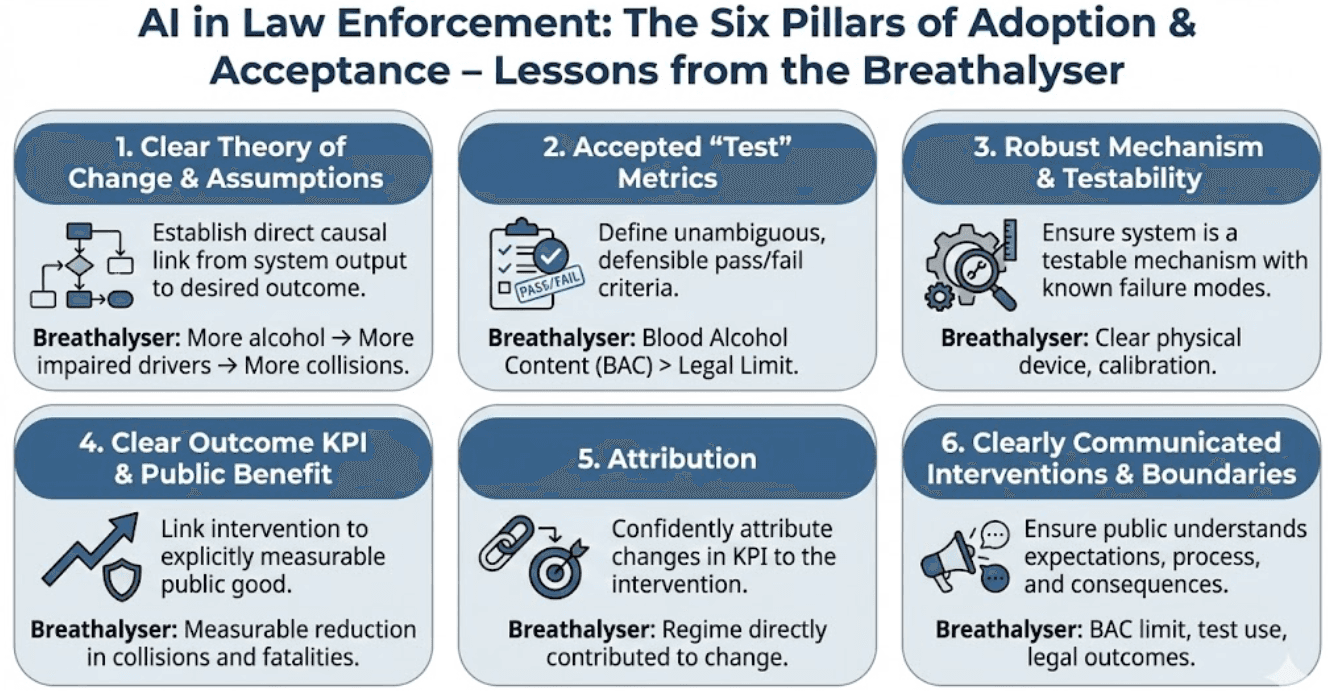

Whilst not without controversy, the breathalyser ultimately gained widespread acceptance because, in my view, it successfully satisfied six core categories that underpin both public and organisational buy-in of a new, enforced technology.

The Six Pillars of Adoption and Acceptance:

1. Clear Theory of Change & Assumptions

The breathalyser case had a well-defined theory of change, with a clear causal chain from intervention to outcome, and metrics explicitly chosen to test each step of that chain: more alcohol in the bloodstream → more impaired drivers → more road collisions. AI Implication: You must have a direct causal link between the system’s output and the desired outcome/KPI.

2. Accepted “Test” Metrics

Pass/fail criteria are precisely defined (e.g. Blood Alcohol Content > legal limit). They are unambiguous and defensible. AI Implication: AI systems and their responses require clear, accepted definitions and thresholds of success and failure.

3. Robust Mechanism & Testability

The breathalyser is a testable device with clear physical mechanisms and known failure modes (e.g. calibration). We know when it is working and when it is compromised. AI Implication: The AI model and data pipeline must be treated as a testable mechanism. You must define and detect its failure modes (bias, drift, adversarial noise) to ensure it is functioning as intended.

4. Clear Outcome KPI & Public Benefit

KPI: measurable reduction in collisions and fatalities. The intervention has an obvious public good. AI Implication: AI must be linked to an explicitly measurable public good - the reason the public will tolerate the intervention.

5. Attribution

If the KPI changes, we can say with confidence that the breathalyser regime contributed to that change. AI Implication: AI systems must be piloted and monitored, and their impacts measured, with the same level of rigour, so that changes in the KPI can be attributed to the system.

6. Clearly Communicated Interventions, Boundaries, and Operating Models

The public understands: (a) the expectation (BAC limit), (b) the process (the test will be used), and (c) the consequences. Consent is implicit in choosing to obtain a driving licence and use the roads. AI Implication: Everyone affected - police, public, practitioners - must understand the rules of engagement, boundaries, and consequences through clear, accessible and well-publicised governance and workflow documentation.

These last two pillars are particularly pertinent for public acceptance. In the breathalyser’s case, the public can see a clear benefit, understand attribution, and know exactly what is expected of them. It is a simple, transparent system where the risk/benefit trade-off is overwhelmingly weighted toward benefit - and crucially, one where the mechanism is easy to explain.

This does, however, make the breathalyser case unusually “clean” compared with most AI systems, which tend to involve complex mechanisms and far murkier cause-effect chains...
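To make pillars 2 and 3 a little more concrete, here is a minimal sketch of what "accepted test metrics" and "known failure modes" might look like for an AI system, written as explicit pass/fail checks in the spirit of a BAC limit. Everything in it is an assumption for illustration: the `AcceptanceCheck` class, the metric names, the measured values and the thresholds are hypothetical, and real thresholds would need to be agreed by stakeholders rather than picked by an engineer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCheck:
    """One pre-agreed pass/fail criterion (hypothetical structure)."""
    name: str
    value: float            # measured value from testing or monitoring
    threshold: float        # limit agreed in advance, like a legal BAC limit
    higher_is_better: bool  # direction in which the metric should move

    def passed(self) -> bool:
        if self.higher_is_better:
            return self.value >= self.threshold
        return self.value <= self.threshold


def evaluate(checks):
    """Return (overall_pass, names_of_failed_checks)."""
    failures = [c.name for c in checks if not c.passed()]
    return (len(failures) == 0, failures)


# Illustrative numbers only; none of these come from a real system.
checks = [
    AcceptanceCheck("accuracy", 0.93, 0.90, higher_is_better=True),
    AcceptanceCheck("false_positive_rate_gap_between_groups", 0.06, 0.02,
                    higher_is_better=False),
    AcceptanceCheck("input_drift_score", 0.08, 0.10, higher_is_better=False),
]

ok, failures = evaluate(checks)
print("PASS" if ok else f"FAIL: {failures}")
```

The point is less the code than the discipline it forces: each failure mode (here bias and drift) has a named metric, a direction and a threshold agreed before deployment, so "is it working?" has an unambiguous answer.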

The Dual Challenge for AI Deployment

As far as I see it, the struggle to move AI past the proof-of-concept stage comes down to two related hurdles:

Lack of Clarity: Most AI use cases lack clarity across these six pillars. Without consensus on outcomes, metrics, or attribution, stakeholders cannot agree and it becomes impossible to demonstrate real-world value. These pillars are hard to define for complex AI systems.

Risk vs Benefit Trade-Off: AI introduces new risks or exacerbates old ones. It’s not just about measuring positive impact; we must rigorously test for potential increases in harm and be able to attribute those outcomes back to the system. It’s not always clear that the benefits do outweigh the risks.

Together, these hurdles leave organisations at an impasse.

What This Means for AI Deployment

I believe that any organisation serious about deploying AI systems in sensitive domains should prioritise use cases based on where they sit in a matrix defined by the following two criteria:

Clarity: The six pillars above can be defined with the highest possible clarity. This is a critical dimension of use-case feasibility, alongside technical and operational feasibility.

Risk vs Benefit: The anticipated benefits and/or potential for harm reduction (backed by a strong theory of change) clearly outweigh the risks and the potential for introducing new harm.

If a use case sits in the quadrant of high ambiguity and high potential for harm, it should probably be shelved - even where the hypothetical upside appears transformational. In such cases, there is likely a more impactful alternative for change that is better understood, already proven, and potentially underfunded.
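As a rough illustration, the matrix can be sketched as a simple triage function. This is a sketch under stated assumptions rather than a scoring method: `pillar_clarity` (0 to 1), `net_benefit` (anticipated benefit minus anticipated harm) and both cut-offs are hypothetical, and the two "mixed" quadrants are my own reading, since only the prioritise and shelve corners are spelled out above.

```python
from enum import Enum


class Decision(Enum):
    PRIORITISE = "prioritise"
    CLARIFY_FIRST = "define the pillars more rigorously first"
    MITIGATE_FIRST = "reduce risk or strengthen the benefit case first"
    SHELVE = "shelve"


def triage(pillar_clarity: float, net_benefit: float,
           clarity_cutoff: float = 0.5,
           benefit_cutoff: float = 0.0) -> Decision:
    """Place a use case in one quadrant of the matrix.

    pillar_clarity: hypothetical 0-1 score for how clearly the six
        pillars can be defined for this use case.
    net_benefit: hypothetical score, anticipated benefit minus
        anticipated harm; positive means benefits outweigh risks.
    The cut-offs are illustrative assumptions, not recommendations.
    """
    if not 0.0 <= pillar_clarity <= 1.0:
        raise ValueError("pillar_clarity must be between 0 and 1")

    clear = pillar_clarity >= clarity_cutoff
    beneficial = net_benefit > benefit_cutoff

    if clear and beneficial:
        return Decision.PRIORITISE
    if clear:
        # Well understood, but the trade-off is not yet favourable.
        return Decision.MITIGATE_FIRST
    if beneficial:
        # Promising on paper, but too ambiguous to demonstrate value.
        return Decision.CLARIFY_FIRST
    # High ambiguity and high potential for harm.
    return Decision.SHELVE


print(triage(pillar_clarity=0.8, net_benefit=0.4).value)
print(triage(pillar_clarity=0.2, net_benefit=-0.3).value)
```

In practice the hard part is producing honest inputs, not running the function; a score for clarity is only as good as the effort spent defining each pillar.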

The real challenge is not that this ambiguity is impossible to navigate, but that too few organisations attempt to define these pillars with genuine rigour. It is difficult to do this well, takes more time than we are conditioned to accept, and runs counter to a culture that has spent a decade telling us to "move fast and break things." High-stakes public deployments demand a different standard.

The breathalyser model provides a fundamental blueprint for this standard. Its success was rooted in the clarity of its impact, its measurement, and its communication. Those building and deploying AI systems should strive for that same level of rigour. A grounded use case, built on these pillars, dramatically increases the likelihood of successful outcomes and public acceptance. Whilst ‘perfect’ definitions of the six pillars aren’t possible for complex AI systems, we must strive for as much clarity as possible to ensure the system is demonstrably worth the trade-off.

I work at Advai, where we specialise in the testing and evaluation of AI systems. If your organisation is struggling to cut through ambiguity and needs clarity on the benefits, risks and evaluation of your AI deployments, please reach out.